Why Adult Content Has No Place Next to a Kids' Platform

We have heard a recurring concern from adults flagged by Rotector: that their involvement with adult content adjacent to Roblox should not count against them. Some are in adult-only Discord servers. Others argue that Roblox's own 17+ and 18+ experiences legitimize adult participation. A few point to user-created "condo" games and say they are separate from the wider platform.

The argument comes in different forms, but the core is the same: "I'm an adult doing adult things. Why does that matter?"

This post explains why it matters. Our reasoning is based on child safety principles and documented evidence, not individual judgment.

Roblox Is a Children's Platform

Roblox's own age-verified data tells the story. Among age-checked daily active users, 35% are under 13, 38% are between 13 and 17, and only 27% are adults, on a platform with over 144 million daily active users.[1][2]

Roblox's February 2026 blog post "Moving Beyond Self-Reported Age" reports age-checked cohorts for the first time: 35% under 13, 38% ages 13-17, and 27% adults. These numbers replaced the old self-reported data. Source: about.roblox.com

Even these numbers likely undercount children. Parents have been completing verification on behalf of their kids, and age-verified accounts are being resold online. The real proportion of children on Roblox may be higher than 73%.

Meanwhile, Roblox's trust-and-safety spending declined 2% year-over-year even as the platform grew. The October 2024 Hindenburg Research report found 38 groups openly trading child sexual abuse material on Roblox, one with 103,000 members.[14]

Hindenburg Research's October 2024 report on Roblox.[14]

Key findings: 900+ "Jeffrey Epstein" username variations active in children's games, 38 groups trading child exploitation material, and accounts with names like "@igruum_minors" earning badges for spending time in kids' games.

Nearly three out of four users on this platform are children. That context matters for everything that follows.

The Documented Grooming Pipeline

Law enforcement has identified a consistent pattern: initial contact on Roblox, migration to Discord or Snapchat, escalation to exploitation or abduction.

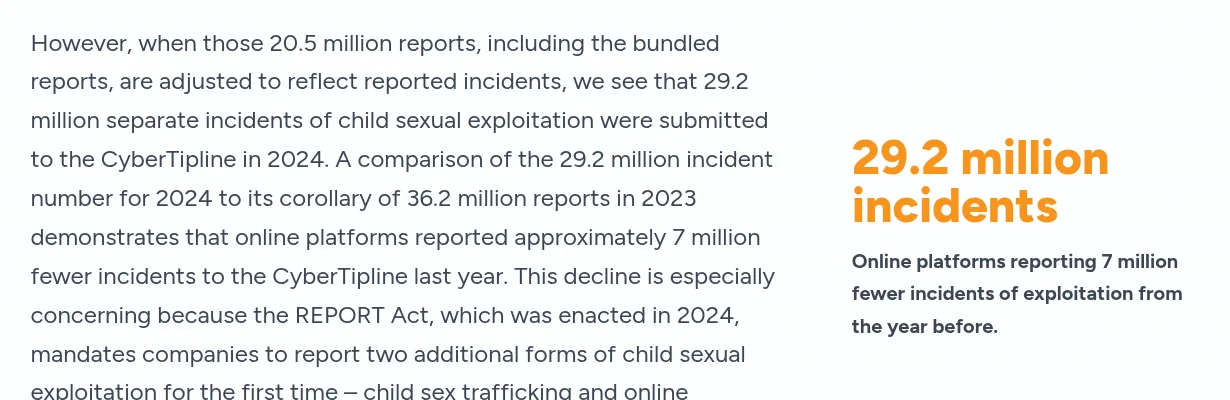

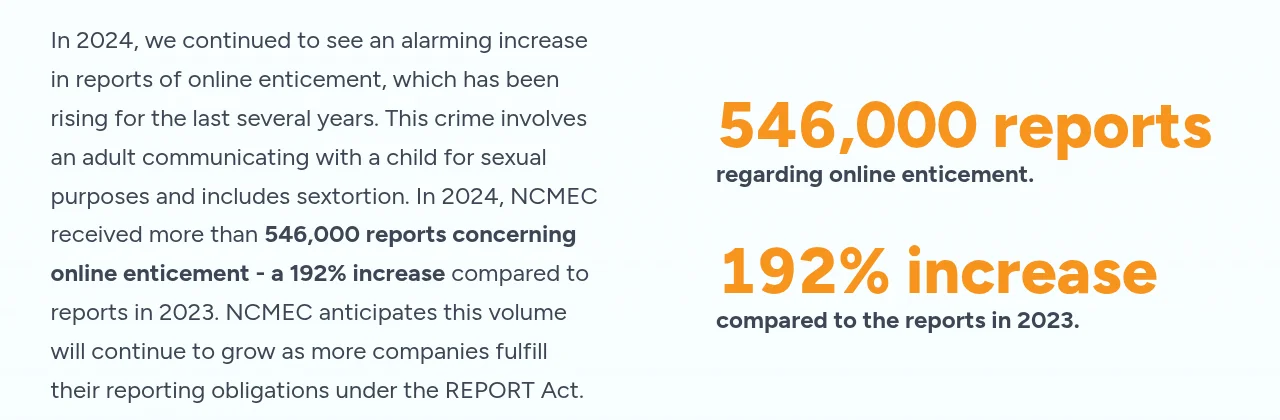

At least 30 people have been arrested in the United States for abducting or sexually abusing children they met on Roblox.[3][4] Roblox's own reports to the NCMEC CyberTipline rose from 675 in 2019 to 24,522 in 2024, an increase of roughly 3,533%.[5] NCMEC enticement reports surged 192% in a single year to over 546,000 in 2024.[6]

NCMEC's 2024 CyberTipline Report documents 29.2 million separate incidents of child sexual exploitation reported by internet platforms. Source: missingkids.org

Online enticement reports to NCMEC surged 192% in a single year, reaching over 546,000 in 2024 alone.

The cases follow the same pattern. Sebastian Romero posed as a child on Roblox and is believed to have abused at least 25 victims.[7] Anthony Borgesano posed as a 15-year-old to lure a child under 12 whom he met through Roblox, and was sentenced to 25 years.[8] Matthew Naval, 27, met a 10-year-old on Roblox and Discord before allegedly abducting her and taking her more than 250 miles from her home.[9]

Adult content adjacent to Roblox, whether condo games, adult Discord servers, or the platform's own 18+ features, creates the environments that predators exploit to reach children.

Why Adult Content Adjacent to a Kids' Platform Is Dangerous

Most adults on Roblox are not predators. Most people engaging with adult content adjacent to the platform have no harmful intentions. But child protection systems are not designed around the majority. They exist because of the minority who pose a risk.

The arguments we hear take different forms depending on the type of adult content. Some say adult-only Discord servers are legitimate hangout spaces. Others point to Roblox's own 17+ and 18+ experiences as evidence that adult content is sanctioned by the platform itself. And user-created condo games, while widely condemned, are sometimes defended as a separate problem unrelated to the broader Roblox ecosystem.

All of these arguments share a common flaw: they evaluate adult content in isolation rather than in the context in which it exists. People can enjoy adult content featuring characters from adult-oriented games or media without raising child-safety concerns. But this is Roblox. Creating pornographic content derived from a platform whose users are predominantly children is a problem regardless of whether any particular child is exposed to it.

Situational Crime Prevention, a criminology framework, stresses reducing harm by restricting opportunities rather than identifying bad actors in advance. For child abuse to occur, three elements are required: a vulnerable child, an enabling environment, and opportunity. Roblox supplies the first in large numbers, and adult content adjacent to it, in any form, supplies the other two.

This is why DBS checks exist in the United Kingdom, why background checks are required for anyone working with children in the United States, and why adults cannot access unsupervised areas in schools or daycare centers, regardless of their intentions. The question is never "is this particular adult a threat?" It is "does this environment create opportunities that a threat actor could exploit?"

We do not allow unsupervised adult access to playgrounds, schools, or youth organizations based on individual claims of good intent. A volunteer at a children's camp goes through background checks not because we assume they're dangerous, but because the system is intended to reduce opportunity regardless of character. Nobody takes this personally, because everyone understands that the safety of children depends on structural protections, not trust.

When adult and child spaces share infrastructure such as accounts, messaging systems, friend lists, or brand identity, they create pathways between predators and potential victims. Roblox's 18+ experiences share the same account system, friend lists, and messaging as the rest of the platform. Condo games exist within Roblox's game discovery system. Adult Discord servers built around Roblox communities put adults and children one click apart.

The boundary between "adult space" and "children's space" is not just structurally porous; it is actively breached. Explicit images from condo communities are uploaded as decals and clothing textures, bypassing moderation filters and appearing directly in children's games. Content originating in these adult spaces often ends up on the Roblox marketplace. The claim that adult content exists in a separate space collapses when that content is constantly re-imported into the platform children use.

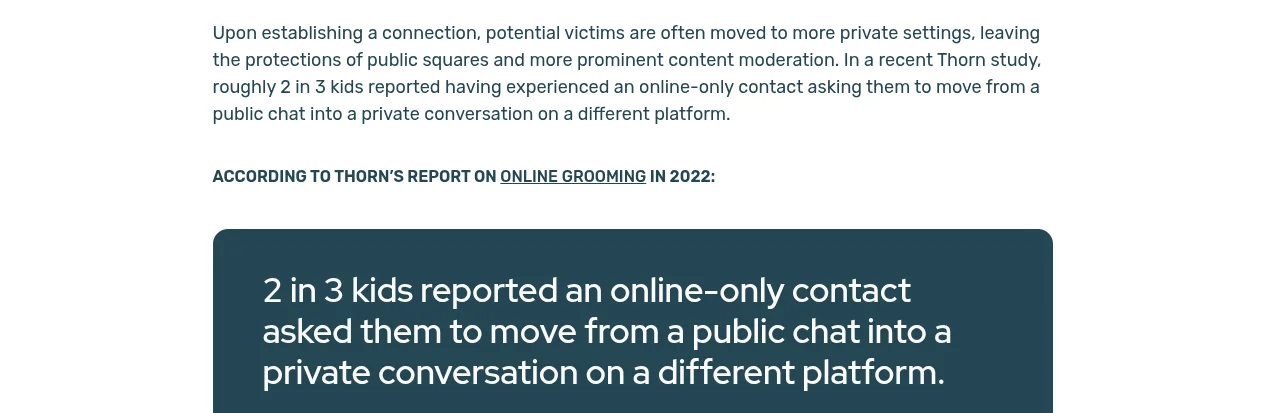

WeProtect Global Alliance's 2023 Global Threat Assessment found that in social gaming environments, a high-risk child grooming situation can develop in as little as 19 seconds, and in 45 minutes on average.[10] Thorn's 2022 Online Grooming report found that nearly 4 in 10 minors have been approached by someone attempting to befriend and manipulate them, and roughly two in three children reported an online contact asking them to move to a private conversation on a different platform.[11]

Thorn's research center documents how online grooming and sextortion have become systemic threats. Source: thorn.org

Thorn's 2022 Online Grooming report: two in three children reported being asked by an online contact to move to a private conversation on a different platform.

The five principles of Situational Crime Prevention are: increase the effort required by offenders, increase the risks of detection, reduce the rewards, remove rationalizing excuses, and reduce provocations. Adult content adjacent to children's platforms undermines at least three of them. It lowers the effort required (shared infrastructure provides easy access), reduces the risk of detection (adult presence is normalized), and supplies rationalizing excuses ("I was just using the adult section").

The normalization problem deserves particular attention. When adult content is treated as acceptable alongside a children's platform, it shifts what the community considers normal. Condo Discord servers with tens of thousands of members do not appear overnight. They grow because each participant reinforces the idea that this is a reasonable thing to do near a platform full of children. That normalization creates scale, and scale creates opportunity. A condo server with 50,000 members is not 50,000 predators, but it is an environment where predators can operate with minimal friction, surrounded by a large population that has already accepted the premise that adult content adjacent to Roblox is fine. Even adults who never intend to harm a child contribute to the ecosystem that makes harm easier.

There is also a behavioral contradiction worth noting. Many of the people who insist their involvement with adult content is harmless go to considerable lengths to avoid detection: using alt accounts, rotating Discord identities, and avoiding linkable profiles. If the activity were truly harmless, there would be no reason to hide it. The evasion itself signals awareness that the behavior is, at minimum, not socially acceptable on a children's platform.

The Australian eSafety Commissioner's Safety by Design framework reinforces this. Its first principle: the burden of safety should never fall solely upon the user.[13] A system that relies on children staying in the "right" space, on age gates working perfectly, or on adults self-policing their behavior places that burden on the most vulnerable participants.

The eSafety Commissioner's Safety by Design framework: "Safety by Design also acknowledges the need to make digital spaces safer and more inclusive to protect those most at risk."[13]

Regulators are reaching the same conclusion. Six state attorneys general, from Louisiana, Kentucky, Texas, Florida, Iowa, and Tennessee, have filed lawsuits against Roblox.[15][16] Kentucky's AG called the platform "a hunting ground for child predators." Louisiana's AG called it "the perfect place for pedophiles." Florida's AG filed a 76-page complaint alleging Roblox misrepresented its safety practices.[16] The UK Online Safety Act now holds platforms legally responsible for proactively protecting children, with fines of up to 10% of global revenue for failures.[17] The regulatory world is moving toward more scrutiny of adult-child proximity on digital services, not less.

Age Verification Doesn't Fix This

A common counterargument is that age-gated spaces address the issue. If a server or experience requires age verification, children cannot access it, so adults in those spaces should not be flagged.

The evidence consistently points in the other direction.

The Center for Democracy and Technology has testified before Congress that age verification systems "continue to raise significant concerns for all users' privacy and free expression rights" and that such mechanisms can be circumvented.[12] The Australian eSafety Commissioner has similarly noted that "most technology companies don't really have an accurate picture of the numbers of children on their platforms, or their actual ages."[19]

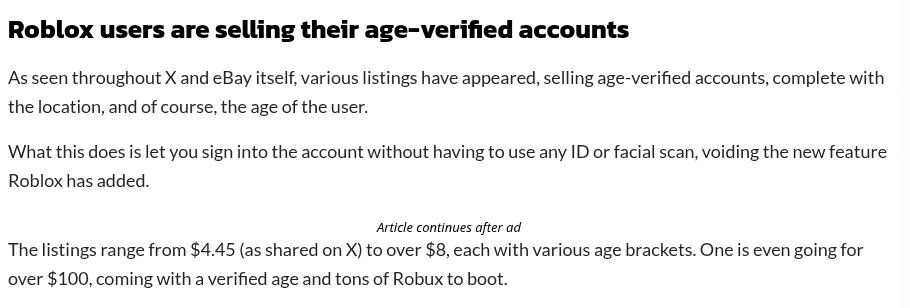

Roblox's own experience proves the point. After Roblox implemented facial age estimation, reports emerged of parents completing verification on behalf of their children, inadvertently classifying minors as adults and granting them unrestricted access.[2] Within days of the rollout, age-verified Roblox accounts were found being sold on eBay for as little as $4.45.[18]

Within days of Roblox's mandatory age verification rollout, age-verified accounts appeared for sale on eBay starting at $4.45.[18]

If Roblox, in spite of significant investment in trust and safety, cannot consistently verify age on its own platform, then age gates on Discord servers, condo games, or even Roblox's own 18+ experiences do not provide a meaningful barrier.

What Rotector Does About It

It is important to clarify what being flagged actually means and how our detection works.

Rotector does not rely on a single signal. Our system uses multiple detection methods to identify accounts that warrant review:

- Outfit detection: AI scans user avatars and clothing inventories for inappropriate or sexually explicit items, including bypassed fetishwear, see-through clothing, and other content that slips past Roblox's filters.

- Friend network analysis: Accounts with a disproportionate number of flagged users in their friend list are surfaced for review.

- Condo group analysis: We track groups associated with condo communities and inappropriate content. Membership in these groups is a signal.

- Trap game detection: Code-gated honeypot games are advertised inside condo Discord servers. The access code can only be obtained from those servers, so anyone who enters it and gets logged was actively seeking condo content. We wrote a full explanation of how this works.

- Condo game monitoring: We monitor players in real condo games to identify accounts actively participating in inappropriate content on Roblox.

- Discord server connections: Services like Bloxlink and RoVer link Roblox accounts to Discord servers. Connections to servers matching patterns associated with adult or inappropriate content are flagged.

- Profile analysis: Usernames, display names, and bios are checked for patterns associated with predatory behavior or inappropriate content.
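
To make the friend network signal more concrete, here is a minimal sketch of one way "a disproportionate number of flagged users" could be measured: the share of an account's friends that are already flagged, checked against a cutoff. The function name, the 0.3 ratio cutoff, and the five-friend minimum are hypothetical values chosen for illustration, not Rotector's actual parameters.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class FriendNetworkSignal:
    flagged_friends: int  # friends that are already flagged
    total_friends: int    # size of the de-duplicated friend list
    ratio: float          # flagged_friends / total_friends
    surfaced: bool        # True if the account should go to human review


def friend_network_signal(
    friend_ids: Iterable[int],
    flagged_ids: Iterable[int],
    min_ratio: float = 0.3,  # hypothetical cutoff for "disproportionate"
    min_friends: int = 5,    # hypothetical floor to avoid tiny-sample noise
) -> FriendNetworkSignal:
    """Surface an account when a large share of its friends are flagged.

    Sketch only: the cutoffs are illustrative assumptions. A surfaced
    account is merely queued for review; nothing is decided here.
    """
    friends = set(friend_ids)
    flagged = friends & set(flagged_ids)
    total = len(friends)
    ratio = len(flagged) / total if total else 0.0
    # With very few friends, a single flagged friend would already look
    # "disproportionate", so require a minimum list size first.
    surfaced = total >= min_friends and ratio >= min_ratio
    return FriendNetworkSignal(len(flagged), total, ratio, surfaced)


if __name__ == "__main__":
    # Ten friends, four of them already flagged (99 is not a friend).
    print(friend_network_signal(range(1, 11), {2, 4, 6, 8, 99}))
```

Running it prints a signal with four flagged friends out of ten, a ratio of 0.4, and `surfaced=True`. The minimum friend count is there so that an account with two friends, one of them flagged, is not treated as disproportionately connected on a 50% ratio alone.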

Each of these signals carries weight, but none alone determines a final outcome. Being flagged means an account will be reviewed by a human, not that someone is labeled a predator or banned. A flag is a starting point, not a verdict.
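
The rule that a flag is a starting point rather than a verdict can be sketched as a scoring step whose only possible outcome is "send to a human." Everything below is hypothetical: the signal names mirror the detection list, but `SIGNAL_WEIGHTS`, `REVIEW_THRESHOLD`, and `ReviewQueue` are illustrative assumptions, not Rotector's actual code or weighting.

```python
from dataclasses import dataclass, field
from typing import Iterable

# Hypothetical integer weights, one per signal type in the detection list.
# These values are illustrative only.
SIGNAL_WEIGHTS = {
    "outfit": 3,
    "friend_network": 2,
    "condo_group": 3,
    "trap_game": 6,
    "condo_game": 6,
    "discord_server": 3,
    "profile": 2,
}

# Hypothetical score at which an account is shown to a human reviewer.
REVIEW_THRESHOLD = 5


@dataclass
class ReviewQueue:
    """Collects accounts for human review; it never bans or labels anyone."""

    pending: list = field(default_factory=list)

    def submit(self, user_id: int, signals: Iterable[str]) -> int:
        """Score the signals that fired for an account and queue it if needed.

        Returns the score. Reaching the threshold only means "a human should
        look at this"; the reviewer makes the actual decision.
        """
        fired = set(signals)  # the same signal firing twice counts once
        unknown = fired.difference(SIGNAL_WEIGHTS)
        if unknown:
            raise ValueError(f"unknown signals: {sorted(unknown)}")
        score = sum(SIGNAL_WEIGHTS[s] for s in fired)
        if score >= REVIEW_THRESHOLD:
            self.pending.append((user_id, score, sorted(fired)))
        return score


if __name__ == "__main__":
    queue = ReviewQueue()
    queue.submit(101, ["profile"])                # score 2: not queued
    queue.submit(102, ["outfit", "condo_group"])  # score 6: queued
    print(queue.pending)
```

Because the function can only add an account to a review queue, a noisy signal costs reviewer time rather than producing a wrong label. Lowering the hypothetical threshold flags more accounts and shifts more work onto review, which is the trade-off a small team accepts when it prefers over-flagging to missing accounts.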

We recognize that detection systems are not perfect. A formal appeal process is available for anyone who believes they were flagged incorrectly. Appeals are taken seriously, and users have been cleared when evidence supports their case.

However, we will not remove adult content connections as detection signals. Research, criminal cases, law enforcement data, and guidance from major child safety organizations all indicate that adult content adjacent to children's platforms creates structural risk. Whether that adult content takes the form of Discord server memberships, condo game participation, inappropriate outfits, or suspicious group activity, each provides a meaningful signal. Ignoring any of them because they occasionally flag innocent users would be negligent.

As a small team focused on protecting children on a platform where the company has not met safety expectations, we prefer to flag more accounts and clear innocent users through review rather than risk missing those that require intervention.

Why This Matters

Consider it from a parent's perspective. They install our extension to check their child's friend list. They see a warning next to an account linked to adult content adjacent to Roblox. That parent now has information they can act on. Maybe it's nothing. Maybe it's something. But they get to make that call.

The data, the arrests, the lawsuits, and the harm behind these statistics are the reasons this system exists. Major child safety organizations, from NCMEC to Thorn to the NSPCC, have consistently warned that mixed-age digital environments create structural risk to children. Our approach reflects that guidance.

References

1. Roblox, "Roblox Age Checks Required to Chat," January 2026. about.roblox.com

2. Roblox, "Moving Beyond Self-Reported Age," February 5, 2026. about.roblox.com

3. Bloomberg Businessweek, "Roblox's Pedophilia Problem," 2024. bloomberg.com

4. Aggregate of Bloomberg, NBC, AP, and DOJ reporting on Roblox-linked arrests through 2025. Summary at en.wikipedia.org.

5. NCMEC, "CyberTipline Reports by Electronic Service Provider," 2019-2024. missingkids.org

6. NCMEC, "2024 CyberTipline Report," enticement section. missingkids.org

7. KRON4, "Roblox is 'hunting ground for child sex predators,' new lawsuit claims." kron4.com

8. NBC Miami, "South Florida family sues Roblox and Discord after daughter is kidnapped by Roblox predator." nbcmiami.com

9. NBC News, "California man accused of kidnapping 10-year-old he met on Roblox." nbcnews.com

10. WeProtect Global Alliance, "Global Threat Assessment 2023." weprotect.org

11. Thorn, "Online Grooming: Examining Risky Encounters Amid Everyday Digital Socialization," 2022. thorn.org

12. Kate Ruane, CDT, Testimony before the U.S. House Committee on Energy and Commerce, December 2, 2025. congress.gov

13. Australian eSafety Commissioner, Safety by Design framework. esafety.gov.au

14. Hindenburg Research, "Roblox," October 2024. hindenburgresearch.com

15. State AG lawsuits against Roblox: Louisiana AG (nbcnews.com), Kentucky AG (cbsnews.com), Texas AG (texasattorneygeneral.gov), Iowa AG (iowaattorneygeneral.gov), Tennessee AG (wsmv.com).

16. Florida AG, lawsuit against Roblox, December 2025. myfloridalegal.com

17. UK Online Safety Act 2023. legislation.gov.uk

18. Dexerto, "Age-verified Roblox accounts are already being sold on eBay," January 2026. dexerto.com

19. Australian eSafety Commissioner, "eSafety report shows widespread underage use of social media," February 2025. esafety.gov.au

Interested in how we detect condo content on Roblox? Read our breakdown of how trap game detection works. Questions or feedback? Join our Discord community.